
12/2/2023 Tf32 vs praat

In the output of nvidia-smi topo -m, the higher the X in the NVX report, the better. The NVLink generation depends on your GPU architecture.

Let's compare the execution of a gpt2 language model training over a small sample of wikitext, once with NVLink and once without it. NVLink completes the training ~23% faster. In the second benchmark we use NCCL_P2P_DISABLE=1 to tell the GPUs not to use NVLink.

Here is the benchmark command for the DDP w/ NVLink run (abridged). First clear the output directory with rm -r /tmp/test-clm, then launch:

CUDA_VISIBLE_DEVICES=0,1 python -m torch. [...] --nproc_per_node 2 examples/pytorch/language-modeling/run_clm. [...] --dataset_name wikitext --dataset_config_name wikitext-2-raw-v1 --do_train --output_dir /tmp/test-clm --per_device_train_batch_size 4 --max_steps 200

Hardware: 2x TITAN RTX 24GB each + NVLink with 2 NVLinks (NV2 in nvidia-smi topo -m)

Software: pytorch-1.8-to-be + cuda-11.0 / transformers==4.3.0.dev0

The Transformer architecture includes 3 main groups of operations, grouped by compute intensity. Linear layers and components of Multi-Head Attention all do batched matrix-matrix multiplications.
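To make the batched matrix-matrix multiplications mentioned above concrete, here is a minimal pure-Python sketch. The function names `matmul` and `batched_matmul` are illustrative and not taken from any library. Real frameworks run this operation as a single fused GPU kernel, so this only shows the arithmetic.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    inner = len(b)
    assert all(len(row) == inner for row in a), "inner dimensions must match"
    cols = len(b[0])
    return [
        [sum(row[k] * b[k][j] for k in range(inner)) for j in range(cols)]
        for row in a
    ]


def batched_matmul(batch_a, batch_b):
    """Apply matmul independently to each pair of matrices in the batch."""
    assert len(batch_a) == len(batch_b), "batch sizes must match"
    return [matmul(a, b) for a, b in zip(batch_a, batch_b)]


# A batch of two 2x2 problems; the second left operand is the identity.
batch_a = [[[1, 2], [3, 4]], [[1, 0], [0, 1]]]
batch_b = [[[5, 6], [7, 8]], [[9, 10], [11, 12]]]
result = batched_matmul(batch_a, batch_b)
print(result)  # [[[19, 22], [43, 50]], [[9, 10], [11, 12]]]
```

Each batch entry is independent of the others, which is why this group of operations parallelizes well on GPUs.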

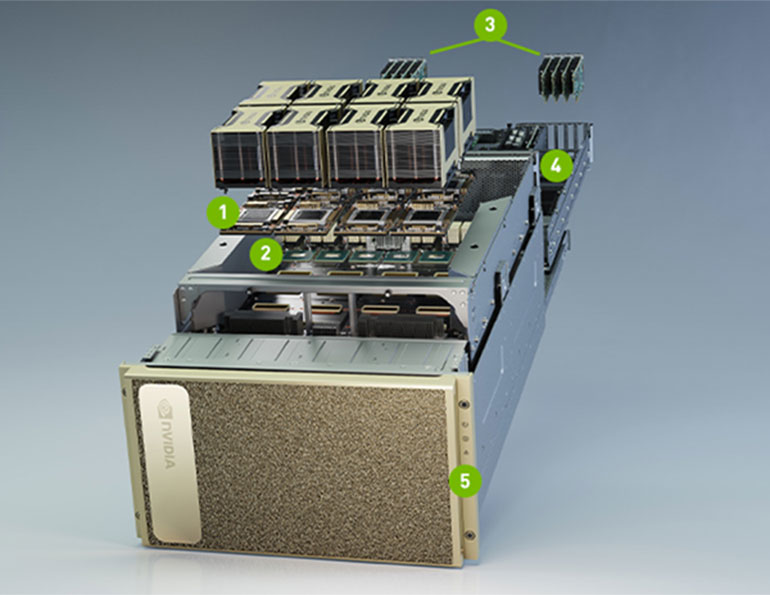
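The NVLink comparison above can be set up along the following lines. This is a sketch under stated assumptions: the helper names are hypothetical, the timings are made-up placeholders rather than measured results, and "faster" is taken to mean a reduction in wall-clock time, which the text does not spell out.

```python
import os


def env_without_p2p(base_env=None):
    """Return a copy of the environment with NCCL peer-to-peer transfers disabled."""
    env = dict(os.environ if base_env is None else base_env)
    env["NCCL_P2P_DISABLE"] = "1"
    return env


def percent_faster(slow_seconds, fast_seconds):
    """Share of the slower run's wall-clock time that the faster run saves."""
    if slow_seconds <= 0 or fast_seconds < 0:
        raise ValueError("slow_seconds must be positive and fast_seconds non-negative")
    return 100.0 * (slow_seconds - fast_seconds) / slow_seconds


# Hypothetical timings, chosen only to illustrate the arithmetic.
without_nvlink = 100.0
with_nvlink = 77.0
print(f"{percent_faster(without_nvlink, with_nvlink):.0f}% faster")  # 23% faster
```

To run the second benchmark, the dictionary returned by `env_without_p2p()` could be passed as the `env` argument of `subprocess.run` when launching the training command.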

If you use multiple GPUs, the way the cards are interconnected can have a huge impact on the total training time. If the GPUs are on the same physical node, you can run nvidia-smi topo -m to see how they are connected. The report uses this legend:

- NV# = Connection traversing a bonded set of # NVLinks
- PIX = Connection traversing at most a single PCIe bridge
- PXB = Connection traversing multiple PCIe bridges (without traversing the PCIe Host Bridge)
- PHB = Connection traversing PCIe as well as a PCIe Host Bridge (typically the CPU)
- NODE = Connection traversing PCIe as well as the interconnect between PCIe Host Bridges within a NUMA node
- SYS = Connection traversing PCIe as well as the SMP interconnect between NUMA nodes (e.g., QPI/UPI)

A report of NV2 tells us the GPUs are interconnected with 2 NVLinks, while a report of PHB indicates a typical consumer-level PCIe+Bridge setup.

Check what type of connectivity you have on your setup. Some of these will make the communication between cards faster (e.g. NVLink), others slower (e.g. PHB).

Depending on the type of scalability solution used, the connectivity speed could have a major or a minor impact. If the GPUs need to sync rarely, as in DDP, the impact of a slower connection will be less significant. If the GPUs need to send messages to each other often, as in ZeRO-DP, then faster connectivity becomes super important to achieve faster training.

NVLink is a wire-based serial multi-lane near-range communications link developed by Nvidia. Each new generation provides a faster bandwidth, e.g. here is a quote from Nvidia Ampere GA102 GPU Architecture:

"GA102 GPUs utilize NVIDIA's third-generation NVLink interface, which includes four x4 links, with each link providing 14.0625 GB/sec bandwidth in each direction between two GPUs. Four links provide 56.25 GB/sec bandwidth in each direction, and 112.5 GB/sec total bandwidth between two GPUs. Two RTX 3090 GPUs can be connected together for SLI using NVLink. (Note that 3-Way and 4-Way SLI configurations are not supported.)"
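As a small worked example of the legend and the GA102 bandwidth figures above, the following sketch maps a connection code from the topology report to its legend text and recomputes the quoted bandwidth totals. The names `TOPO_LEGEND` and `describe_link` are hypothetical helpers, not part of nvidia-smi or any library.

```python
import re

# Legend entries as listed in the text above.
TOPO_LEGEND = {
    "PIX": "Connection traversing at most a single PCIe bridge",
    "PXB": "Connection traversing multiple PCIe bridges "
           "(without traversing the PCIe Host Bridge)",
    "PHB": "Connection traversing PCIe as well as a PCIe Host Bridge "
           "(typically the CPU)",
    "NODE": "Connection traversing PCIe as well as the interconnect "
            "between PCIe Host Bridges within a NUMA node",
    "SYS": "Connection traversing PCIe as well as the SMP interconnect "
           "between NUMA nodes (e.g., QPI/UPI)",
}


def describe_link(code):
    """Translate one report entry, such as 'NV2' or 'PHB', into its legend text."""
    match = re.fullmatch(r"NV(\d+)", code)
    if match:
        return f"Connection traversing a bonded set of {match.group(1)} NVLinks"
    return TOPO_LEGEND.get(code, f"Unknown connection type: {code}")


# Bandwidth figures from the GA102 quote: four links at 14.0625 GB/sec each way.
LINKS = 4
GB_PER_SEC_PER_LINK_PER_DIRECTION = 14.0625

per_direction = LINKS * GB_PER_SEC_PER_LINK_PER_DIRECTION
total = 2 * per_direction  # both directions combined

print(describe_link("NV2"))
print(describe_link("PHB"))
print(per_direction, total)  # 56.25 112.5
```

The arithmetic confirms that the 56.25 GB/sec per-direction figure is the sum over all four links, and the 112.5 GB/sec total counts both directions.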
